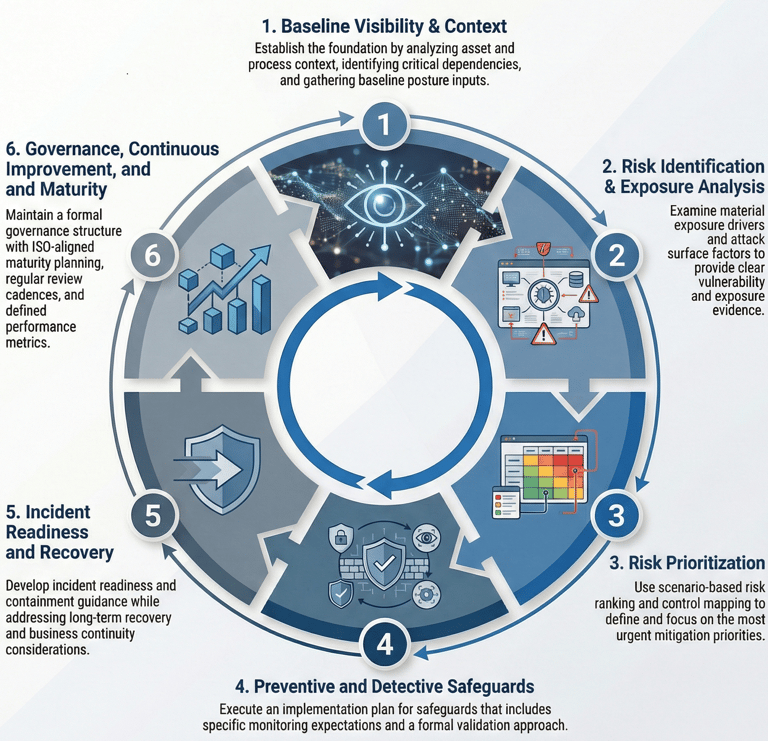

THE RISKAXIS PREDICTIVE CYBERSECURITY FRAMEWORK

The framework embeds widely recognized cybersecurity standards and best-practice models in its internal logic and mappings. This supports consistency and practical adoption without making certification a requirement.

The RiskAxis Predictive Cybersecurity Framework is a structured, risk-oriented methodology designed to help organizations assess their current cybersecurity posture, identify meaningful exposure, and prioritize mitigation actions based on real-world exploitability and operational impact.

The framework is intentionally non-certifying and adaptable. It does not require organizations to adopt a specific vendor, software platform, or formal compliance program. Instead, it provides a consistent analytical structure that can be applied across diverse organizational environments.
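
The introduction describes prioritizing mitigation by real-world exploitability and operational impact. The sketch below shows one common way such a ranking can work. It is not the framework's own scoring model, which this document does not specify. The `Exposure` record, the 0.0-1.0 exploitability estimate, the 1-5 impact scale, and the multiplicative `priority` score are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    """Hypothetical exposure record; field names and scales are illustrative only."""
    name: str
    exploitability: float  # assumed 0.0-1.0 estimate of real-world exploitability
    impact: int            # assumed 1-5 operational impact rating


def priority(exposure: Exposure) -> float:
    """Illustrative score: exploitability weighted by operational impact."""
    return exposure.exploitability * exposure.impact


def prioritize(exposures: list[Exposure]) -> list[Exposure]:
    """Return exposures ordered from highest to lowest illustrative priority."""
    return sorted(exposures, key=priority, reverse=True)


if __name__ == "__main__":
    findings = [
        Exposure("Unpatched VPN appliance", exploitability=0.9, impact=5),
        Exposure("Weak password policy on test wiki", exploitability=0.6, impact=1),
        Exposure("Exposed backup share", exploitability=0.4, impact=4),
    ]
    for item in prioritize(findings):
        print(f"{priority(item):.1f}  {item.name}")
```

Running the script ranks the unpatched VPN appliance first (0.9 × 5 = 4.5), then the exposed backup share (1.6), then the test-wiki password policy (0.6). Multiplying the two factors keeps a highly exploitable but low-impact issue from outranking a moderately exploitable one with serious operational consequences.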

Framework Structure

The framework is organized around a six-pillar lifecycle:

Baseline Visibility & Context

Risk Identification & Exposure Analysis

Risk Prioritization

Preventive and Detective Safeguards

Incident Readiness and Recovery

Governance, Continuous Improvement, and Maturity

Each pillar produces standardized outputs designed to support traceability, governance, and informed decision-making.
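
One way to read the traceability goal is that every pillar's output records which upstream output it was derived from. The following is a minimal sketch under that assumption. The `Pillar` enum reuses the six pillar names listed above in lifecycle order, while `PillarOutput`, its `derived_from` link, and the `trace` helper are hypothetical and not part of any published RiskAxis output format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Pillar(Enum):
    """The six pillars, in lifecycle order, as named in the framework."""
    BASELINE_VISIBILITY = "Baseline Visibility & Context"
    RISK_IDENTIFICATION = "Risk Identification & Exposure Analysis"
    RISK_PRIORITIZATION = "Risk Prioritization"
    SAFEGUARDS = "Preventive and Detective Safeguards"
    INCIDENT_READINESS = "Incident Readiness and Recovery"
    GOVERNANCE = "Governance, Continuous Improvement, and Maturity"


@dataclass
class PillarOutput:
    """Hypothetical output record; the framework's real output format is not specified."""
    pillar: Pillar
    summary: str
    derived_from: Optional["PillarOutput"] = None  # upstream output, for traceability


def trace(output: PillarOutput) -> list[str]:
    """Follow derived_from links back to the origin and return pillar names, oldest first."""
    chain: list[str] = []
    current: Optional[PillarOutput] = output
    while current is not None:
        chain.append(current.pillar.value)
        current = current.derived_from
    return list(reversed(chain))


if __name__ == "__main__":
    baseline = PillarOutput(Pillar.BASELINE_VISIBILITY, "Asset and data-flow inventory")
    exposures = PillarOutput(Pillar.RISK_IDENTIFICATION, "External exposure list",
                             derived_from=baseline)
    backlog = PillarOutput(Pillar.RISK_PRIORITIZATION, "Ranked mitigation backlog",
                           derived_from=exposures)
    print(trace(backlog))
```

In the example, the ranked mitigation backlog traces back through the exposure list to the baseline inventory, so a reviewer can see which pillar produced each input to a decision.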

RiskAxis AI Governance & Security Module

As artificial intelligence tools become embedded in operational workflows, data processing, automation systems, and decision-support environments, organizations face emerging categories of risk that extend beyond traditional cybersecurity controls. These risks include data misuse, model manipulation, automation errors, governance gaps, and systemic operational dependencies.

The RiskAxis AI Governance & Security Module was developed as a structured extension of the RiskAxis Predictive Cybersecurity Framework to address these evolving exposure domains through a risk-based analytical methodology rather than checklist-based compliance.

The module does not provide certifications or audit determinations. Instead, it supports informed, repeatable, and accountable decision-making aligned with responsible AI governance and organizational resilience.

What the Module Supports

The module enables organizations to:

Establish a structured current-state profile of AI-enabled systems and use cases

Identify material exposure drivers related to AI data usage, model behavior, and integration points

Derive plausible risk scenarios tied to real-world operational impact

Generate prioritized mitigation strategies and governance recommendations

Develop an AI governance maturity roadmap aligned with organizational risk tolerance

These outputs are structured inputs to management decisions. They are not audit opinions, regulatory certifications, or pass/fail determinations.
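
The scenario-derivation and prioritization steps listed above can be illustrated with a simple likelihood-and-impact triage against an organizational risk tolerance. This is a minimal sketch assuming 1-5 scales and a single numeric tolerance threshold. `AIRiskScenario`, `triage`, and the example scenarios are hypothetical, and the module's actual scoring approach is not described in this document.

```python
from dataclasses import dataclass


@dataclass
class AIRiskScenario:
    """Hypothetical AI risk scenario; the 1-5 scales are illustrative assumptions."""
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def triage(scenarios: list[AIRiskScenario], tolerance: int) -> dict[str, list[str]]:
    """Split scenarios into those above the risk tolerance and those within it.

    Both lists are ordered from highest to lowest score, so the "mitigate"
    list doubles as a prioritized mitigation backlog.
    """
    ranked = sorted(scenarios, key=lambda s: s.score, reverse=True)
    return {
        "mitigate": [s.description for s in ranked if s.score > tolerance],
        "monitor": [s.description for s in ranked if s.score <= tolerance],
    }


if __name__ == "__main__":
    scenarios = [
        AIRiskScenario("Customer data pasted into an external chatbot", likelihood=4, impact=4),
        AIRiskScenario("Prompt injection alters an automated workflow", likelihood=3, impact=5),
        AIRiskScenario("Model drift degrades internal report quality", likelihood=3, impact=2),
    ]
    print(triage(scenarios, tolerance=9))
```

With a tolerance of 9, the two scenarios scoring 16 and 15 are returned as mitigation priorities, and the model-drift scenario (score 6) stays under monitoring. Lowering or raising the tolerance shifts scenarios between the two lists without changing their relative order.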

Designed for Diverse Organizational Contexts

The module is applicable to:

Small and medium-sized enterprises beginning AI adoption

Organizations integrating third-party AI services

Enterprises deploying internal machine learning models

Public-sector entities implementing AI-driven automation

The methodology is scalable and sector-aware, without being limited to a single regulatory regime.

Public Interest and Systemic Relevance

AI systems increasingly influence sectors such as healthcare, finance, energy, logistics, and public administration. Weak governance or unmanaged AI-related risk can produce cascading operational, financial, and reputational consequences across interconnected ecosystems.

By enabling structured visibility into AI-related risk drivers and governance maturity gaps, the module contributes to:

Strengthening organizational resilience

Supporting responsible AI adoption

Reducing systemic cybersecurity exposure

Promoting evidence-informed governance practices

This approach aligns with the broader public-interest objective of enhancing cybersecurity maturity across organizations that may lack internal AI governance expertise.